SQL as the main ETL language

Speaking of data development, we have seen various programming languages being used.

Some team will choose python for it’s simplicity and for the great pandas library. Other team will choose Scala if they are using Spark. Others may try Spark DataFrame API etc.

But, after we tried in several data projects, we found it may be better to choose SQL as the main ETL language. The reasons behind the suggestion are:

- SQL is a declarative language, so it’s easy to understand and learn.

- SQL is designed for data related calculation, so it has native support for parallel computing.

- Almost every data framework has good support for SQL, e.g. Hive/Spark/Flink etc.

- SQL is understandable for other roles in the team. Not only the developers understand the data calculation logic, but also the data analyst, quality assurer, and even business person.

Using SQL as main ETL language helps greatly with knowledge sharing in the team, which is very important for data projects.

Drawbacks of SQL

SQL is designed to be used in a declarative way and it causes a few troubles when we use SQL to develop complicated ETL.

Think about the following cases.

- We would like to use large computing resources when we’re handling data in the full-data partition since the amount of data there is far larger than that in the other partitions.

- We would like to send out a HTTP request to report status when some step of the ETL fails for some reasons(E.g. some data does not conform to the previous assumptions).

- We would like to reuse some code to check if some order is a valid order (think about e-commerce business).

- We would like to stop at some step of the ETL and check if the data is what we expected.

When we use SQL to develop our ETL, it is hard to handle the above cases. But for a company with a wide range of data usage, there are similar cases everywhere.

SQL with imperative characteristics

Why it is hard to handle the above cases? A main cause is the declarativity of SQL.

For a declarative programming language, we finish a task by design the solution first and then execute the solution in one action.

It works like:

1 | Will do A. |

This way, code may be easier to write. But it’s hard to get the result of some step and do the following things conditionally.

The opposite way of coding is the imperative way, which is much more widely used in general programming language.

It works like:

1 | Do A. |

In this way, it’s easy to get the result of some step and do some following things conditionally.

Declarativity is fantastic in data processing domain. But imperativity is also required when we have complicated logic in our ETL.

SQL with general programming ability

Besides the imperative characteristics, we still need to handle other things in ETL. As mentioned in the above cases that SQL looks hard to handle, we can see that some general programming ability is also required.

These general programming ability could include:

- Sending HTTP request.

- Logging.

- Debugging.

- Code reusing.

- …

A new ETL language based on SQL: Easy SQL

We discussed a lot about ETL programming above. It more and more leads to a new ETL language. The new ETL language is based on SQL, but with support of imperative characteristics and general programming ability.

From a couple of client projects, and after a long time practicing, we finally created a tool (also is a library) named Easy SQL.

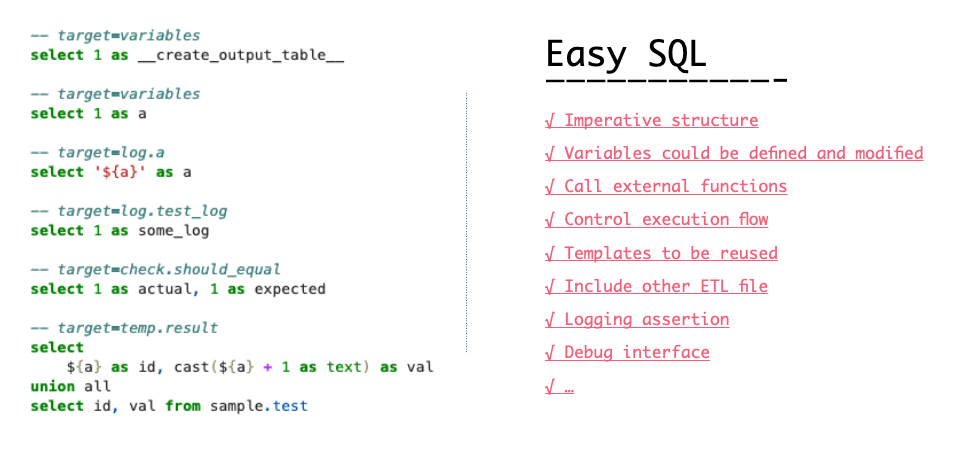

Easy SQL can be viewed as an enhanced SQL used for ETL programming. It provides the following language features:

- An imperative structure of ETL code.

- Variables which could be defined and modified any time.

- A way to call external functions.

- A way to control whether a step should be executed.

- Templates that could be reused in the same ETL file.

- Include command that could be used to reuse code at file level.

- Logging and assertion that could be used for debugging.

Easy SQL provides a light-weight engine to handle these language features, some simple APIs to let programmers interact with the engine programmatically, and a few useful tools to help developing in Easy SQL.

These includes:

- Easy SQL Engine API.

- An ETL runner.

- A debugger interface to use in Jupyter (or any other interactive command line shell) for debugging in Easy SQL.

- A simple design to let programmers create and maintain ETL tests.

- A tool to run tests.

Since the language features provided by Easy SQL are SQL agnostic, any SQL engine could be plugged-in as a backend. There is built-in supported for several popular SQL engines, including SparkSQL, PostgreSQL, Clickhouse, Aliyun MaxCompute, Google BigQuery. More will be added in the near future.

Design principles in Easy SQL

When first tried to design Easy SQL, we found several important things. Which are:

- Keep compatible with standard SQL. So that every SQL editor could be used to develop in Easy SQL.

- Try to use SQL-way to implement most of the features.

- Use intuitive syntax which is also similar to the widely-used syntax in other programming languages.

- Implement widely-used debugging features, such as logging and asserting and even step by step debugging.

These important things become the design principles of Easy SQL. They provide guidance in the whole design process.

We open sourced Easy SQL

Finally, for anyone who is interested in data processing and ETL developing, we’re happy to say that we open sourced Easy SQL on GitHub at: https://github.com/easysql/easy_sql.

If you just want to have a try, please follow the README documentation in the GitHub repository. And the detailed documentation at https://easy-sql.readthedocs.io/en/latest/easy_sql/easy_sql.html may also help a lot.

Easy SQL is still under active development. If you have any good ideas, please raise an issue to talk about it. If you want to know the details about implementation, please just read the code.

Please give us star if you like it! Also looking forward for you to have a try and raise any possible issues. And PRs are welcomed!